Tests, winners, or value. Which would you sacrifice?

Imagine a world where every experiment was quick to build, high-quality, and delivered a treasure trove of learnings as well as a big uplift. Sadly, the reality is that most testing programmes face complexity, limitations, and trade-offs between value and velocity.

So when it comes down to picking which ideas get tested and what trade-offs you’re willing to make, how do you decide?

Here’s a scenario to consider:

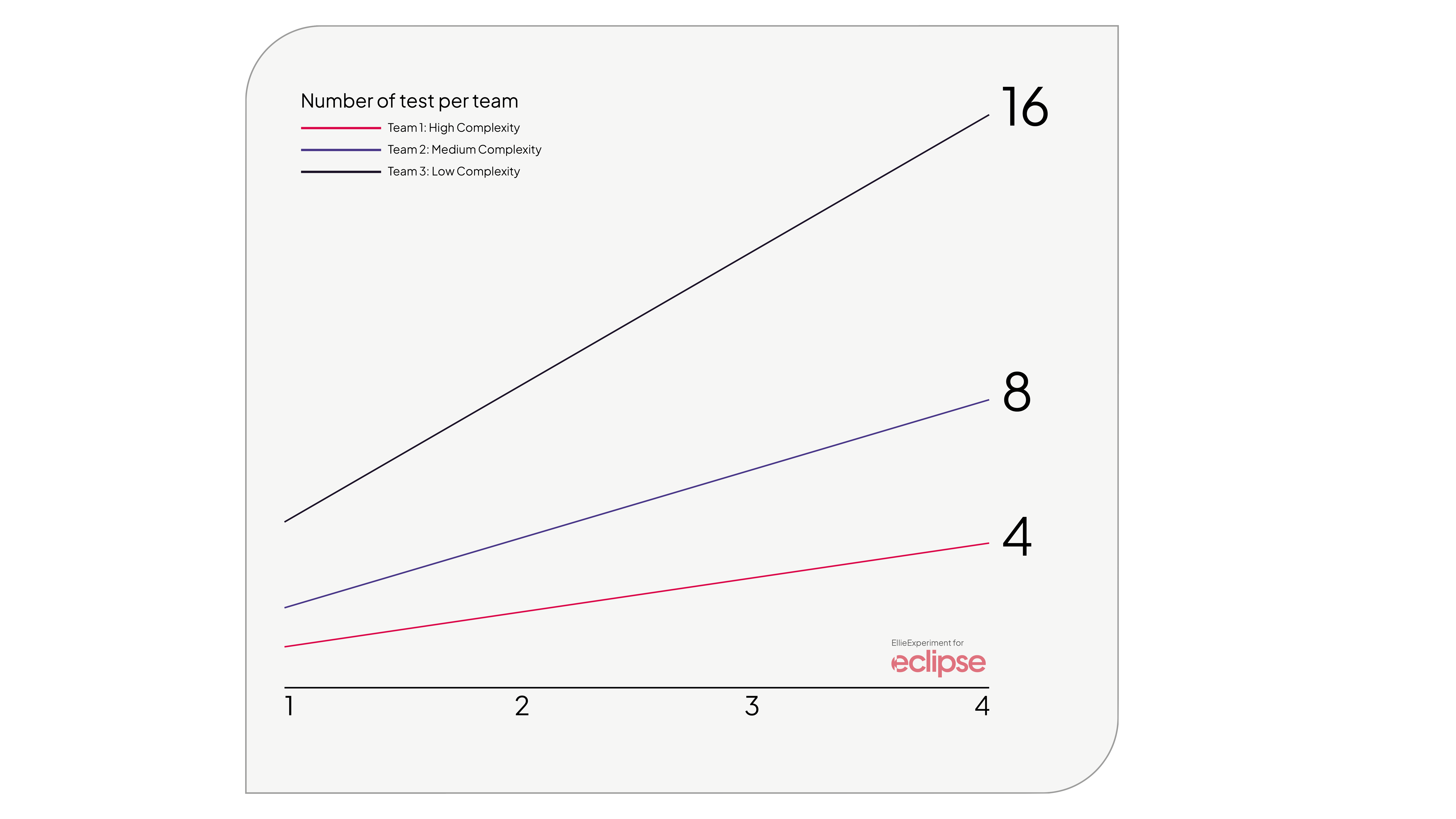

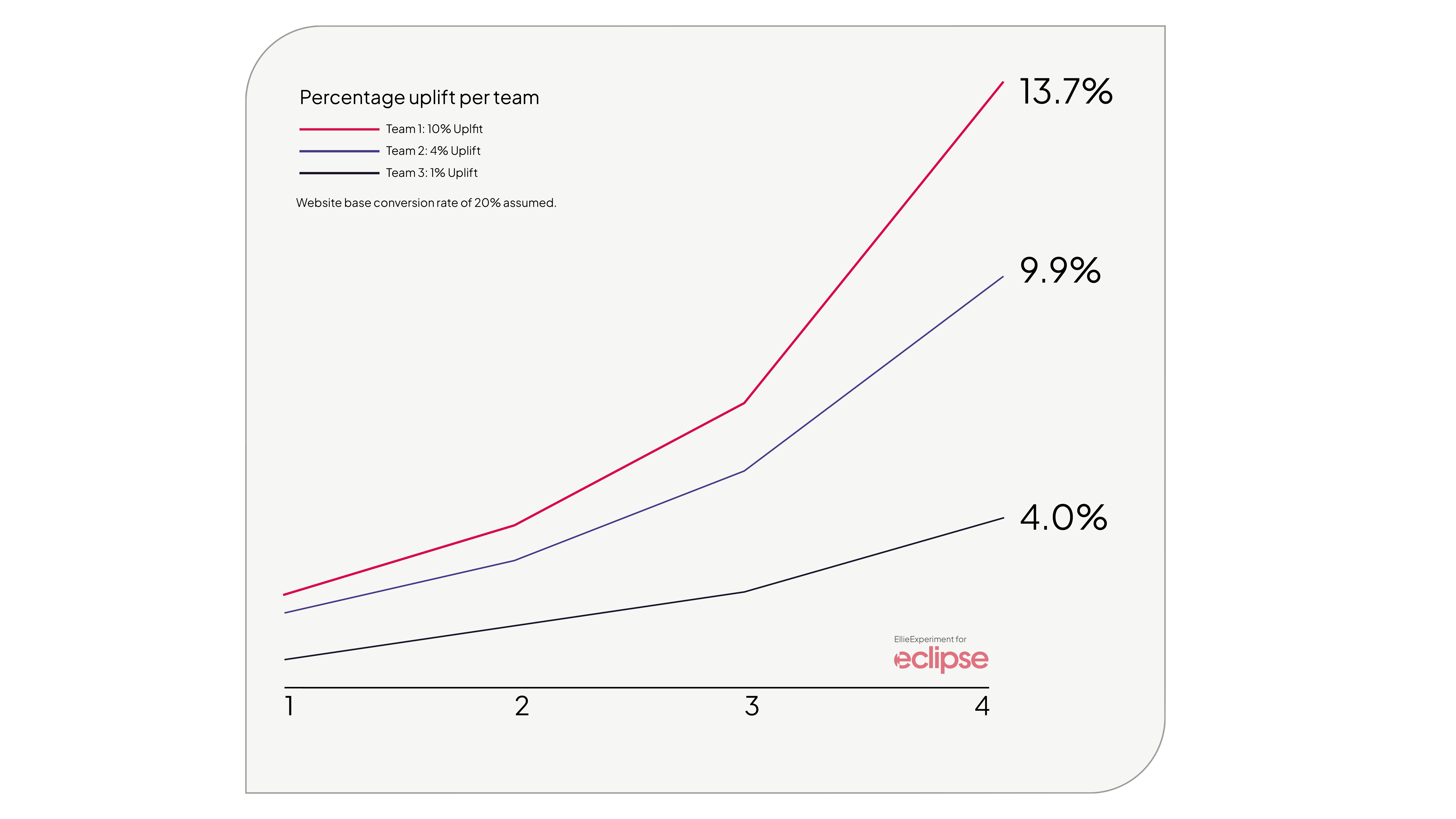

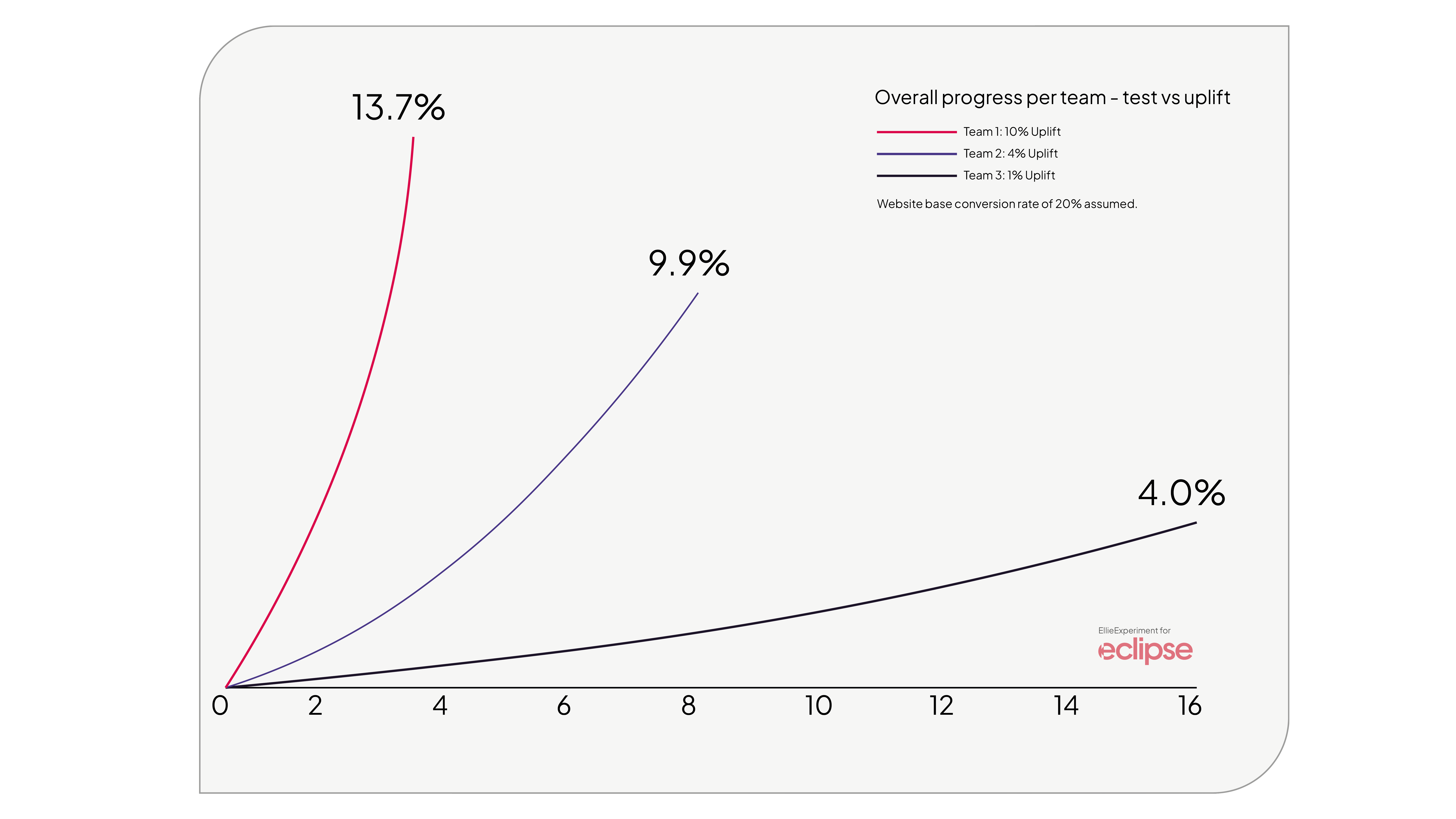

Three teams start working on different experiments, each varying in complexity. The more complex experiments typically lead to bigger uplifts or more important learnings.

But these complex tests take longer to design and build. As a result, the team running more complex experiments does fewer tests per month.

How does this impact the outcome?

Team 1: Focuses on highly complex tests, achieving a 10% uplift, but only delivers 1 experiment per month.

Team 2: Focuses on moderately complex tests, achieving a 4% uplift, and delivers 2 experiments per month.

Team 3: Focuses on low complexity tests, achieving 1% uplift, and delivers 4 experiments per month.

There is no right or wrong answer here. Each option has a trade-off. Our job is to pick the approach that best matches the needs of the business and environment we’re working in.
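One rough way to compare the three teams is to put numbers on them. The sketch below multiplies each team's uplift by its monthly test count and by a win rate. The uplift and velocity figures come from the scenario above. The win rates are purely hypothetical placeholders, since the scenario does not state them. Treating the quoted uplift as "uplift per winning test" and simply adding uplifts together are also simplifying assumptions.

```python
from dataclasses import dataclass


@dataclass
class Team:
    name: str
    uplift_per_win: float  # from the scenario (assumed to be per winning test)
    tests_per_month: int   # from the scenario
    win_rate: float        # ASSUMPTION: hypothetical, not stated in the article


def expected_monthly_uplift(team: Team) -> float:
    """Expected uplift per month = uplift per win x tests per month x win rate."""
    return team.uplift_per_win * team.tests_per_month * team.win_rate


teams = [
    Team("Team 1", 0.10, 1, 0.20),  # win rate is a made-up placeholder
    Team("Team 2", 0.04, 2, 0.30),  # win rate is a made-up placeholder
    Team("Team 3", 0.01, 4, 0.50),  # win rate is a made-up placeholder
]

for team in teams:
    print(f"{team.name}: {expected_monthly_uplift(team):.1%} expected uplift per month")
```

With these placeholder win rates the three teams land close together, which is one way to see why no option is obviously right. Swap in your own programme's win rates and the ranking can change quickly.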

To understand what factors influence trade-off decisions, we asked six experimentation folk what trade-offs they would be most willing to make and why. The options provided varied in terms of value vs. quantity:

- I would rather have high-value ideas, even if 30-50% of them fail.

- I would rather have a high win rate, even if we don't launch very many experiments.

- I would rather launch many experiments, even if we don't have much control over quality or value.

- I would rather launch more variants and increase the chance of winning per experiment, even if we don't launch any more experiments this year.

- If an experiment is not going to yield 10x results, we shouldn't do it.

Which would you pick? If you’re not sure, read on to find out what factors you need to take into consideration.

Programme Maturity

Early on in a testing programme, everyone is finding their feet. Easy-to-build tests that get some early wins can help build interest, iron out process issues, and start to build muscle memory. But after the initial buy-in has been earned and the pressure to get results increases, many teams switch from lower-value tests with a higher win rate to higher-value tests with a lower win rate.

Low-maturity testing programmes often rely on A/B testing tools to build tests, limiting the types of experiments they can run. But as maturity increases, you’ll probably get more resources allocated to your testing team. This means those big test ideas, which often require significant developer resources, are now an option.

As experimentation maturity increases, it also changes what is left to test.

Early on, simple tests tend to tackle a lot of the “low-hanging fruit,” but once these have been resolved, what’s left are bigger, meatier problems that require more time and effort to test.

Effectively, you’ve reached a local maximum, and you start to see diminishing returns from your efforts. Simply running more tests won't fix the problem, as you’re working on a highly optimised website. Instead of small iterative tests, the focus has to shift to more radical and innovative changes to achieve results.

Risk Appetite

How open is your company to risk?

If the risk appetite is low, there’s often a desire to test everything to a high level of statistical confidence. In this situation, losing tests are often more acceptable, as they are seen as preventing revenue loss rather than failing to deliver an uplift.

Businesses in highly regulated industries, such as healthcare or financial services, as well as those with high revenue (where there’s more to lose), usually have a lower risk appetite. For these businesses, the preferred trade-off is usually higher-quality ideas at a lower velocity.

Another interesting perspective that might sway your trade-off decision is around inconclusive tests. Statistically valid yet inconclusive tests are often less desirable than a big negative result.

As such, bigger test ideas with more significant changes tend to be preferred to avoid inconclusive results.
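Part of the reason bigger changes help avoid inconclusive results is statistical: a larger effect needs far fewer visitors to detect. Here is a minimal sketch using the standard normal-approximation sample-size formula for comparing two conversion rates. The 5% baseline conversion rate, 5% significance level, and 80% power are illustrative assumptions, not figures from this article, and the function name is hypothetical.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_uplift, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant for a two-sided two-proportion z-test."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_uplift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


BASELINE = 0.05  # ASSUMPTION: illustrative baseline conversion rate

for uplift in (0.01, 0.04, 0.10):
    n = sample_size_per_variant(BASELINE, uplift)
    print(f"{uplift:.0%} relative uplift: about {n:,} visitors per variant")
```

Because the required sample grows with the inverse square of the difference, a test chasing a 1% relative uplift needs roughly a hundred times the traffic of one chasing 10%, so it is much more likely to end inconclusive on a typical site.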

Company Goals

The overarching goals of your company will also play a large role in the trade-offs you make.

What’s important is picking a trade-off that best matches the business goals. Doing so will help you gain greater support from the C-suite.

But the type of goals can also affect the trade-offs you make. For example, if the financial target for the year is a big percentage increase on the current numbers, you’ll need ideas that have the potential to deliver the biggest value. Small incremental uplifts won’t hit your targets. It’s good to realise that these big ideas typically have lower chances of succeeding and can take longer to build, but overall, it is the right strategy for the goals you have.
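To see why small uplifts struggle against a big target, it helps to count the winning tests required. The sketch below assumes that uplifts compound multiplicatively and hold for the whole year. The 20% target is a hypothetical figure, and the per-win uplifts are borrowed from the three-team scenario earlier in the article.

```python
def wins_needed(target_increase: float, uplift_per_win: float) -> int:
    """Count compounding wins until cumulative growth reaches the target."""
    if uplift_per_win <= 0:
        raise ValueError("uplift_per_win must be positive")
    wins, growth = 0, 1.0
    while growth < 1 + target_increase:
        growth *= 1 + uplift_per_win
        wins += 1
    return wins


TARGET = 0.20  # ASSUMPTION: hypothetical annual target, not from the article

for uplift in (0.01, 0.04, 0.10):
    print(f"{uplift:.0%} per win: {wins_needed(TARGET, uplift)} winning tests needed")
```

Under these assumptions, a 20% target takes 19 wins at 1% each but only 2 wins at 10% each. Those are winning tests, so the number of experiments you need to run is higher still once your win rate is factored in.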

Departments and Functions

Where you sit in the company will impact the trade-offs you make.

If, for example, you are in a strategy-focused role, you will be motivated to take the higher-quality ideas to help answer significant business questions. Simply knowing that customers are more likely to convert on a green button has no strategic business value, whereas finding out which features convince customers to pay a higher price can have a massive impact on product, marketing, and sales teams.

The same is true for teams focused on understanding the customer: higher-quality test ideas are more likely to generate learnings about intent, wants, and needs. In these functions, teams focus on whether an idea was validated or disproven and what it says about their audience, rather than on winning or losing.

Given the disruption nearly all industries are facing due to technological and economic changes, innovation has never been higher on the C-suite agenda. If innovation is essential, then the “high-value ideas, even if 30-50% of them fail” option is a no-brainer.

What trade-off are you willing to make?

Understanding your business environment can help inform which trade-offs are right for your experimentation programme and help to guide what you test.

To learn more, check out my talk and video, where I propose a value vs. velocity model to help businesses decide what to prioritise. Or get in touch with me via the coaching button below to discuss how we can build an experimentation programme that matches your business needs.
