The Testing Backlog: Where Ideas Go to Die

Some of the most common experimentation practices used today are based on misconceptions. Many found their way into A/B testing literature and have been repeated so many times that most people take them as gospel.

In this six-part series on product experimentation fallacies, I’ll bust common misconceptions that so many teams are falling foul of, and offer alternative approaches that can be used instead.

In this series, I’ll cover:

• Three prioritisation and planning fallacies.

• Three statistical fallacies.

• Two experiment design fallacies.

Up first, an ideation fallacy that nearly all testing teams fall for: the test backlog.

Product Testing Misconception #1: The Test Backlog

Ask around, and you’ll find that most experimentation teams create a backlog of all possible ideas, prioritise them and then execute. Teams often think it's easier to prioritise the "best" idea when there are more ideas to start with.

The truth

- You are never going to test all (or even most) of the ideas in your test backlog.

- Your priorities will change in the next month, quarter, or year, possibly before you finish prioritising and gathering evidence for a long list of ideas.

- Your backlog might need to change every time you get results from a new experiment.

The "Master Experimentation Backlog" is, in reality, performative theatre for Product Managers. It’s a pervasive and damaging ritual that wastes so much time and effort.

If you’re on one of the few teams that doesn’t use a testing backlog, picture a large Google Sheet or bloated Jira board containing hundreds of potential A/B test ideas, meticulously tagged by "effort" and "impact", often using a scoring framework like ICE or PIE. Experimentation teams treat these lists like sacred artefacts, operating under the illusion that if you gather enough hay, the needle will eventually present itself. They believe that the path to a "great" program is paved with a high volume of choices, and that the more ideas you have to prioritise, the better the "best" idea will be.

The uncomfortable truth that makes the "process-first" consultants squirm is that a massive, well-prioritised backlog is not the road to success. It’s a bureaucratic security blanket that helps you avoid difficult conversations.

Here’s the reality of test backlogs that the "Best Practice" pushers won’t tell you.

1. You’re maintaining a graveyard

Most of the ideas in your test backlog will never see the light of day.

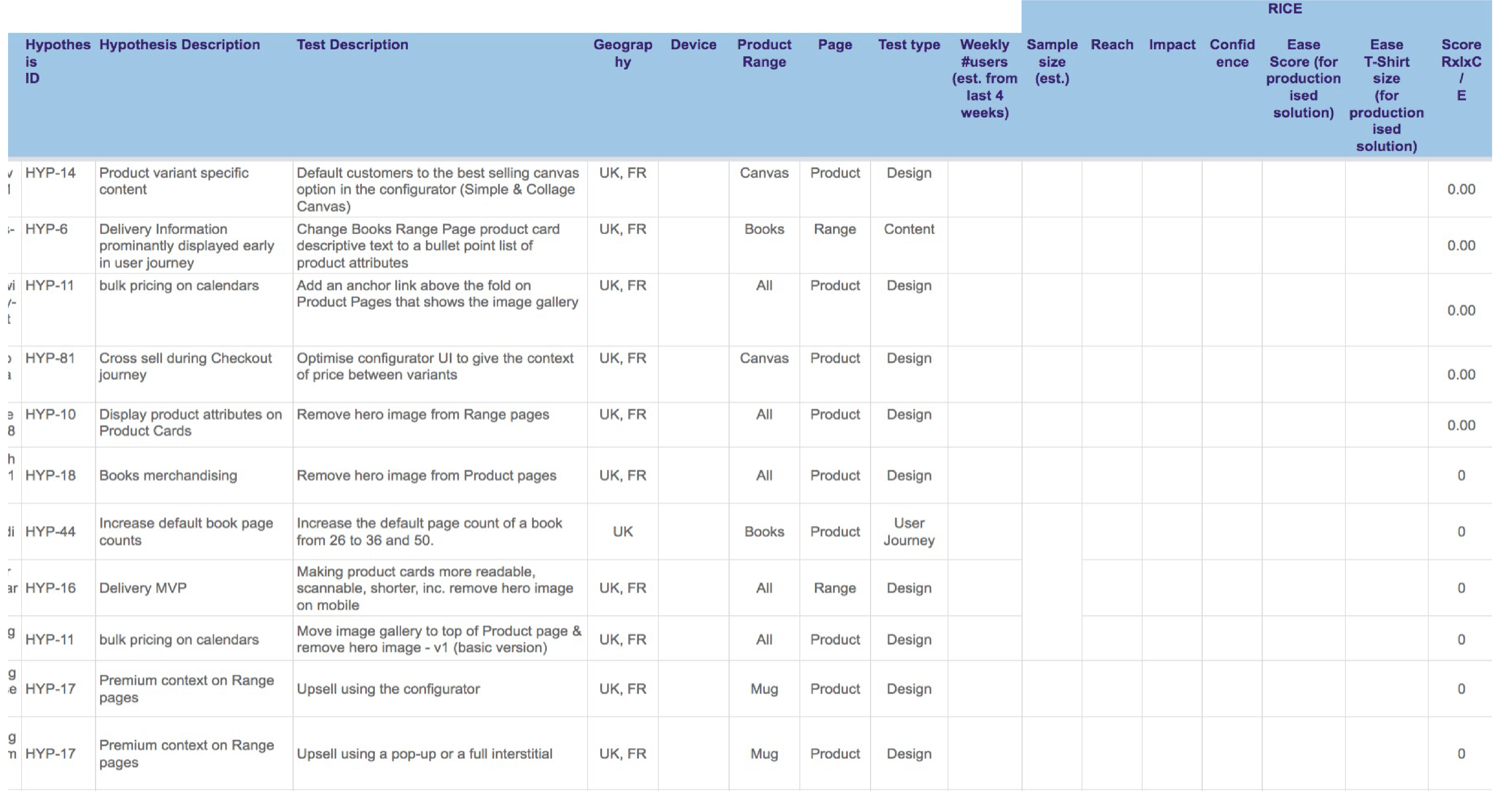

Take a look at this ideas backlog I received when I first joined Photobox. Everyone was allowed to enter their ideas into the spreadsheet.

As you can see on the right, no one ever filled in the RICE framework because by the time they had gone from the first spreadsheet to the third spreadsheet, things had changed so much that there was no point in prioritising any of these ideas. To give you an idea of scale, the third spreadsheet had around 300 ideas in it.

A backlog of this magnitude isn't a "pipeline"; it’s a list of things you’ve already decided aren't important enough to do, but you're too emotionally attached to delete.

Optimizely data shows that the median number of tests run annually is just 34. That means, if you have a backlog of 150 ideas, only 34 of them (about 23%) can be tested in a year, and roughly 77% will not get tested. How much time and effort are you willing to put into documenting ideas that will never get tested?
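The arithmetic behind that figure is easy to rerun with your own numbers. This is a minimal sketch: the function name `untested_share` is made up for illustration, and it assumes one year of testing with no new ideas added to the backlog.

```python
def untested_share(backlog_size: int, tests_per_year: int) -> float:
    """Fraction of backlog ideas that cannot be tested within one year."""
    if backlog_size <= 0:
        raise ValueError("backlog_size must be positive")
    # You cannot test more ideas than the backlog contains.
    tested = min(tests_per_year, backlog_size)
    return 1 - tested / backlog_size


# Figures from the paragraph above: 150 ideas, 34 tests per year.
share = untested_share(backlog_size=150, tests_per_year=34)
print(f"{share:.0%} of ideas go untested in a year")
```

Swap in your own backlog size and yearly test count to see how much of your list is realistically reachable.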

2. Ideas have a short shelf life

Even if you were to test everything on your backlog, the likelihood is that by the time you reach row 50, the market has moved, your tech stack has evolved, and half of your assumptions have been proven wrong.

Company and team priorities can change when a competitor launches a new feature, when the board shifts the OKRs, when your lead engineer finds a massive bug or when global events shift public opinion.

By the time you finish the gruelling task of prioritising row 87 against row 42, the environment around you has already changed. You are wasting time on the high-fidelity planning of low-certainty futures.

3. New data can render your backlog obsolete

Most teams understand that experimentation is a feedback loop, not a linear process. Every time you finish a test, you learn something about your user.

If your experiment on the checkout flow fails, it will likely alter what you test next. But if you are wedded to a static, pre-prioritised backlog of 100 "checkout tweaks," you are ignoring the very data you claim to value. Your backlog is out of date the second a new result hits the dashboard.

How to create a better testing backlog

The solution is to continuously collect data.

I know a lot of people talk about continuous discovery, but few do it in reality. New ideas have to be supported by evidence. Requiring quality evidence can, on its own, quickly cut an excessively long list.

Start by looking at the backlog of ideas and discard those that:

- Lack supporting evidence.

- Are too small.

- Are unlikely to have enough impact.
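If your backlog lives in a spreadsheet export, this pruning step could be sketched as a simple filter. Everything here is an assumption for illustration: the `Idea` fields, the `prune` helper, the sample ideas, and the 0.3 impact cut-off are all hypothetical, and you would replace them with whatever your team actually records.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Idea:
    name: str
    evidence: tuple[str, ...]  # sources backing the idea; empty means none
    is_substantial: bool       # False when the change is too small to be worth a test
    expected_impact: float     # hypothetical 0-1 estimate, not a measured value


def prune(backlog: list[Idea], min_impact: float = 0.3) -> list[Idea]:
    """Keep only ideas that pass all three checks from the list above."""
    return [
        idea
        for idea in backlog
        if idea.evidence and idea.is_substantial and idea.expected_impact >= min_impact
    ]


# Made-up sample ideas, one per failure mode plus one survivor.
backlog = [
    Idea("Simplify checkout form", ("user interviews", "funnel data"), True, 0.6),
    Idea("Change button colour", ("funnel data",), False, 0.1),
    Idea("Add a chatbot", (), True, 0.5),
]

for idea in prune(backlog):
    print(idea.name)
```

Only the first idea survives: the second is too small and low-impact, and the third has no evidence behind it.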

One way to do this is to use what are called evidence scores.

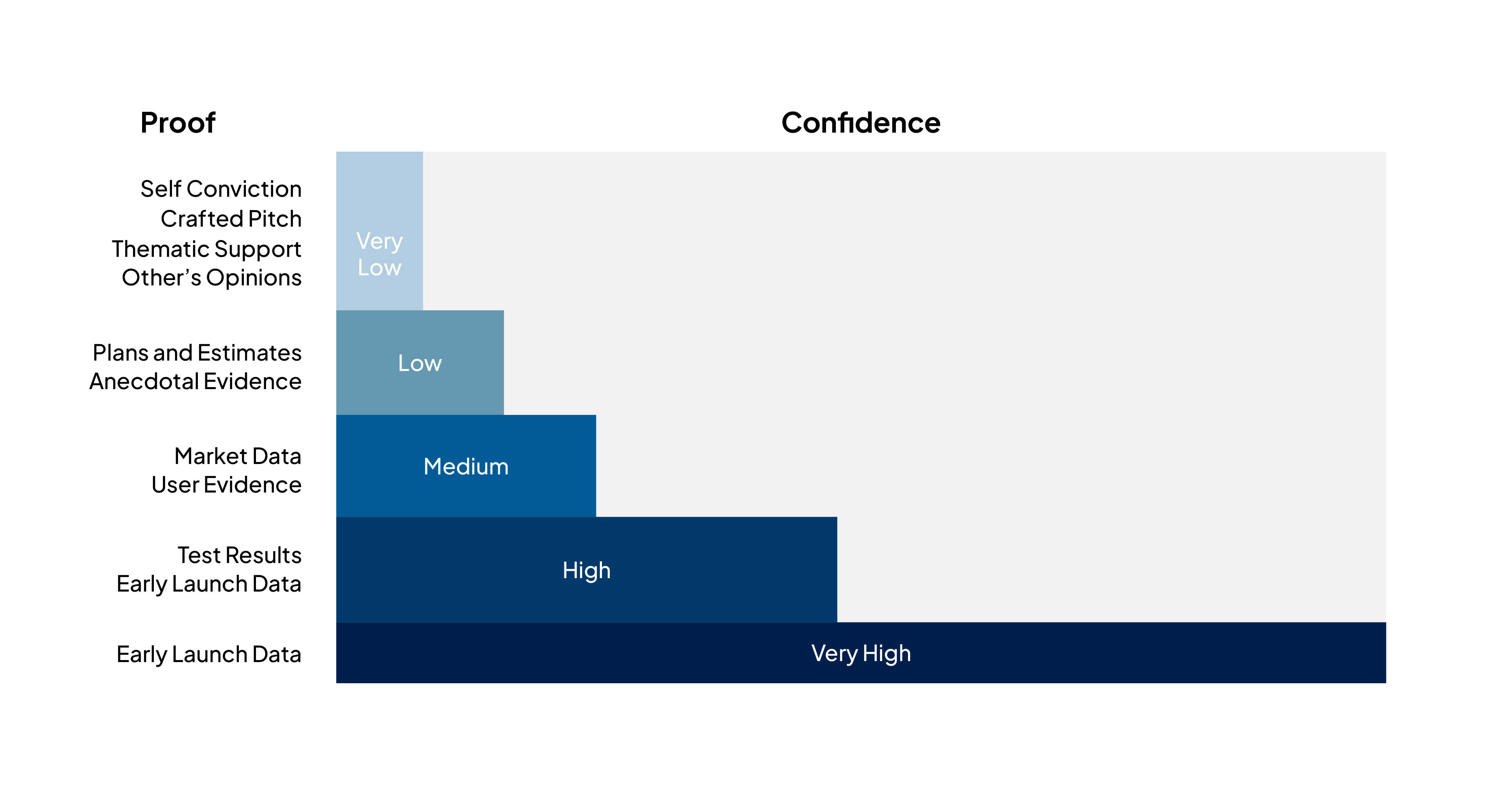

This acid test for ideas is a fairly common concept, but Itamar Gilad has written it up in a really effective way. In the graph above, Itamar mapped out different sources of “proof”, from self-conviction, which carries a very low level of confidence, all the way through to late launch data (like statistically valid test results).

The level of investment in an idea should always stay proportional to the level of confidence in it. This is because ideas that arise from A/B test results, for example, have a much higher likelihood of having an impact than a blue-sky idea your boss suggested that hasn’t shown up in any form of research.
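One way to make "investment proportional to confidence" concrete is to cap the effort you allow on an idea by its evidence score. The sketch below is illustrative only: the score values, the category names, and the 20-day budget are placeholders I have assumed, not the figures from Itamar Gilad's write-up.

```python
# Hypothetical confidence scores on a 0-10 scale, weakest evidence first.
# These values are placeholders, not Itamar Gilad's published numbers.
EVIDENCE_SCORES = {
    "self_conviction": 0.1,
    "anecdotal_feedback": 1.0,
    "customer_research": 3.0,
    "ab_test_result": 8.0,
    "launch_data": 10.0,
}


def max_investment_days(evidence: str, full_budget_days: float = 20.0) -> float:
    """Cap the effort on an idea in proportion to its evidence score."""
    score = EVIDENCE_SCORES[evidence]
    return full_budget_days * score / 10.0


for source in EVIDENCE_SCORES:
    print(f"{source}: up to {max_investment_days(source):.1f} days")
```

Under these assumed numbers, an idea backed only by self-conviction earns a fraction of a day (enough to go and look for evidence), while one that grew out of a previous A/B test result can justify most of the budget.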

Summary

Spend more time on customer research and data analysis. Spend less time on backlog grooming or trying to keep a big backlog up-to-date.

Only add ideas which have a high confidence level.

Keep the number of test ideas realistic (relative to how many tests you run per year). Listing out hundreds of ideas just creates more work and less clarity.

Test results should be used to refine the backlog as a feedback loop.

To learn more about a data- and research-led approach to test ideation, connect below or watch my talk about The Fallacies of AB testing, where I explain all the ideation fallacies and provide a bunch of resources to help you.